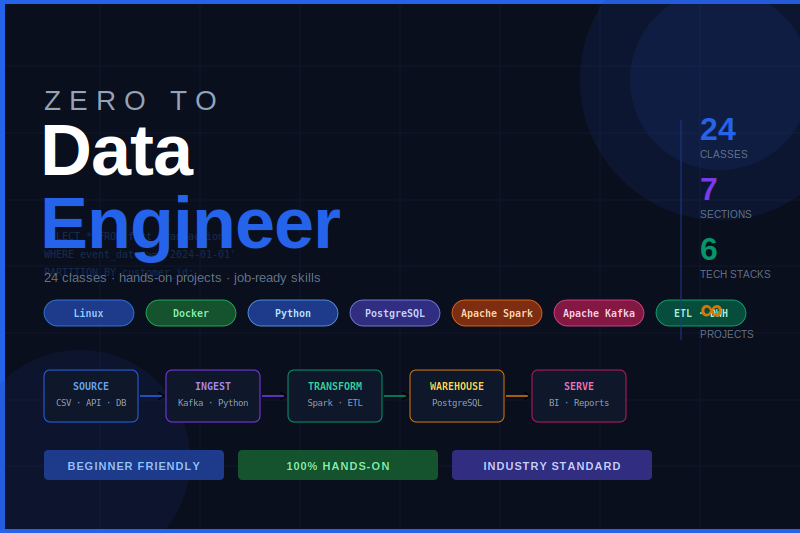

Course Description

This comprehensive course takes you from data engineering fundamentals to building production-grade data pipelines used at top tech companies. You'll master the complete modern data stack — Python, Apache Spark, Kafka, NiFi, Docker, and cloud-native warehousing — through hands-on projects that mirror real-world scenarios.

Whether you're a software developer transitioning into data engineering or an analyst ready to level up, this course gives you the battle-tested skills to design, build, and operate scalable data infrastructure with confidence.

What you'll learn

- Design and implement end-to-end ETL and ELT pipelines from scratch

- Build real-time streaming pipelines with Apache Kafka

- Model and optimize data warehouses using dimensional modeling best practices

- Write clean, production-grade Python for data engineering tasks

- Understand the difference between Lambda and Kappa architecture

- Process massive datasets efficiently using Apache Spark and distributed computing

- Orchestrate complex data workflows and automate data ingestion with Apache NiFi

- Containerize and deploy data pipelines using Docker and Docker Compose

- Apply data quality, monitoring, and observability patterns to your pipelines

- Implement CDC (Change Data Capture) patterns for real-time sync

This course includes:

- 45.00 hours on-demand video

- Assignments

- 1 article

- 1 downloadable resource

- Access on mobile and TV

- Certificate of completion

Course Content

-

What Does a Data Engineer Actually Do?

-

The Modern Data Stack: A Landscape Overview

-

Batch vs Streaming vs Micro-batch: Choosing the Right Pattern

-

Data Pipeline Architecture: Lambda vs Kappa

-

Python Environment Setup: pyenv, venv, and pip

-

Python Data Types and Structures Engineers Must Know

-

File I/O: Reading CSV, JSON, Parquet, and Avro

-

Working with APIs and HTTP Requests

-

Database Connectivity: SQLAlchemy and psycopg2

-

Writing Reusable Pipeline Code with Classes and Modules

-

Error Handling, Logging, and Retry Logic in Pipelines

-

Python Project: Build a File Ingestion Framework

-

Advanced SQL: Window Functions, CTEs, and Subqueries

-

Dimensional Modeling: Star Schema vs Snowflake Schema

-

Slowly Changing Dimensions (SCD): Types 1, 2, and 3

-

Data Vault Modeling: Hubs, Links, and Satellites

-

Indexing Strategies for Analytical Workloads

-

Data Normalization vs Denormalization for Performance

-

Project: Design a Sales Analytics Data Model

-

ETL vs ELT: When to Use Which and Why

-

Extraction Patterns: Full Load, Incremental, and CDC

-

Data Transformation Techniques: Cleaning, Validation, Enrichment

-

Loading Strategies: Upsert, Merge, Insert-Only

-

Idempotency in Pipelines: Building Safe Re-runnable Jobs

-

Data Quality Checks: Schema Validation and Null Handling

-

Pipeline Error Handling and Dead Letter Queues

-

Project: Build a Complete ETL Pipeline for E-Commerce Data

-

Data Warehouse Architecture: OLAP vs OLTP

-

PostgreSQL as a Data Warehouse: Setup and Optimization

-

Cloud Data Warehouses Overview: Snowflake, BigQuery, Redshift

-

Partitioning and Clustering for Query Performance

-

Materialized Views and Aggregation Tables

-

Data Lineage and Metadata Management

-

Project: Build a Data Warehouse for a Retail Business

Requirements

- Basic Programming Knowledge

Student Feedback

None

Review

No reviews yet. Be the first!

Please sign in to write a review.

Instructor

Instructor Instructor

None

Instructor User

PhD in Mathematics | Data Scientist | ML/AI ResearcherBio